Somewhere in today’s call queue is a customer who will churn next month. Another is confused by a product your team just updated. A third has been transferred twice for a problem that should have been solved in sixty seconds. They’re all telling you this, clearly, in their own words, and every conversation is being recorded.

The problem isn’t the data. It’s that recording a conversation and understanding it are two entirely different things.

Most organizations have solved the first. They have transcripts, call logs, and storage. What they don’t have is a way to move from volume to meaning: to know, at scale, what customers are actually experiencing and why. With over 265 billion calls processed each year globally and digital channels growing at roughly 40% year over year, the gap between what organizations capture and what they actually learn keeps widening.

This blog explores how that changes, and what it takes to turn conversational data into decisions. The path has two stages: first, conversational signals must be reliably captured and analyzed; then they must be activated operationally.

What Is the Google Conversational Analytics API, and What Are Its Core Signals?

The Google Conversational Analytics API is a managed service designed to extract structured intelligence from conversational data. Unlike basic transcription services that convert speech to text, this API analyzes the semantic content, emotional trajectory, and behavioral patterns within conversations.

The API supports multiple modalities, including voice calls, chat transcripts, and hybrid interactions. It operates within the broader Google Cloud ecosystem and works alongside Speech-to-Text API, BigQuery, and Looker. Together, these services create an end-to-end conversation intelligence pipeline that transforms raw audio or text into actionable business insight.

Core Conversation Intelligence Signals

The Google Conversational Analytics API produces structured signals that transform unstructured conversations into analyzable data. These outputs fall into five primary categories:

- Sentiment Analysis: The API evaluates emotional tone at both conversation and turn level, helping teams detect dissatisfaction patterns and pinpoint where sentiment shifts occur within an interaction.

- Topic and Intent Extraction: Using natural language understanding, the API automatically identifies customer intents and discussion topics directly from conversation content. This reduces reliance on manual disposition codes and improves consistency in contact-reason reporting.

- Conversational Dynamics Metrics: Behavioral metrics such as agent talk-time distribution, silence duration, interruptions, and overtalk provide quantitative visibility into interaction quality and agent performance.

- Phrase and Keyword Detection: The API surfaces contextually significant phrases, including compliance statements, escalation triggers, and competitor mentions, enabling automated quality monitoring and review workflows.

- Confidence Scores: Each detected signal includes a confidence score, allowing downstream systems to filter or weight insights based on reliability.
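
To make the role of confidence scores concrete, here is a minimal Python sketch of how a downstream system might filter or weight signals. The `Signal` record, its field names, the 0.0 to 1.0 confidence range, and the 0.7 threshold are all assumptions made for illustration; they are not the API's actual response schema.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    """Hypothetical, simplified shape for one detected signal."""
    kind: str          # e.g. "intent" or "phrase"
    value: str
    confidence: float  # assumed to lie in [0.0, 1.0]


def filter_by_confidence(signals, threshold=0.7):
    """Keep only the signals at or above the confidence threshold."""
    return [s for s in signals if s.confidence >= threshold]


def weighted_intent_counts(signals):
    """Sum confidence per intent, so uncertain detections count for less."""
    totals = {}
    for s in signals:
        if s.kind == "intent":
            totals[s.value] = totals.get(s.value, 0.0) + s.confidence
    return totals


# Illustrative values only, not real API output.
signals = [
    Signal("intent", "cancel_subscription", 0.92),
    Signal("intent", "billing_question", 0.41),
    Signal("phrase", "speak to a manager", 0.88),
    Signal("intent", "cancel_subscription", 0.55),
]

reliable = filter_by_confidence(signals, threshold=0.7)
weights = weighted_intent_counts(signals)
```

Filtering suits automation that should fire only on reliable detections, while weighting keeps low-confidence detections in aggregate reporting without letting them dominate.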

Why These Signals Matter Architecturally

On their own, conversation transcripts are difficult to operationalize. Structured signals provide the semantic layer that makes interaction data measurable, comparable, and automation-ready across the enterprise.

By converting raw dialogue into normalized attributes, the API enables organizations to:

- Correlate sentiment trends with churn or CSAT

- Detect emerging product or service issues earlier

- Benchmark agent performance consistently

- Trigger downstream automation and case workflows
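
As a rough illustration of the first item in this list, the sketch below computes a Pearson correlation between per-conversation sentiment and CSAT. The numbers are made up purely to show the calculation, and the pairing of one sentiment score with one CSAT rating per conversation is an assumption about how the data would be joined.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient for two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / sqrt(var_x * var_y)


# Illustrative values only: one average sentiment score and one CSAT
# rating (1 to 5) per conversation.
avg_sentiment = [-0.8, -0.2, 0.1, 0.5, 0.9]
csat = [1, 2, 3, 4, 5]

r = pearson(avg_sentiment, csat)
```

A strong positive value on real data would suggest sentiment can serve as an early proxy for CSAT on conversations that never receive a survey response.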

Architecturally, the Conversational Analytics API functions as the bridge between transcription pipelines and business intelligence systems. This transformation shifts conversations from passive records to active operational signals that can feed dashboards, machine learning models, and agentic workflows.

Processing Model

The Google Conversational Analytics API uses an asynchronous processing model designed for scale and pipeline decoupling. Conversations are submitted for analysis after ingestion and transcription, with results returned independently of the upstream capture flow.

Short chat interactions typically complete within seconds, while longer voice calls may take up to a minute, depending on duration and complexity.

Enterprises typically implement one of two patterns:

- Near-real-time processing for agent assist, live monitoring, and rapid escalation

- Batch post-conversation analysis for reporting, quality management, and model training
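
The decoupling described here can be sketched with a simple queue-and-worker pattern. In this minimal Python example, `analyze_conversation` is a hypothetical stand-in that only simulates latency; it is not a real client method, and a production pipeline would likely use a managed queue rather than an in-process one.

```python
import asyncio


async def analyze_conversation(conversation_id: str, transcript: str) -> dict:
    """Hypothetical stand-in for an analysis request (not a real API call)."""
    await asyncio.sleep(0.01)  # simulates analysis latency
    return {
        "conversation_id": conversation_id,
        "turn_count": len(transcript.splitlines()),
    }


async def worker(queue: asyncio.Queue, results: dict) -> None:
    """Pull conversations off the queue and analyze them one at a time."""
    while True:
        conversation_id, transcript = await queue.get()
        try:
            results[conversation_id] = await analyze_conversation(
                conversation_id, transcript
            )
        finally:
            queue.task_done()


async def run_pipeline(conversations: dict, concurrency: int = 2) -> dict:
    """Ingestion only enqueues; workers analyze independently of it."""
    queue = asyncio.Queue()
    results = {}
    workers = [
        asyncio.create_task(worker(queue, results)) for _ in range(concurrency)
    ]
    for conversation_id, transcript in conversations.items():
        queue.put_nowait((conversation_id, transcript))
    await queue.join()  # wait until every queued conversation is processed
    for task in workers:
        task.cancel()
    await asyncio.gather(*workers, return_exceptions=True)
    return results


conversations = {
    "chat-1": "customer: hi\nagent: hello",
    "call-7": "customer: my bill is wrong\nagent: let me check\ncustomer: thanks",
}
results = asyncio.run(run_pipeline(conversations))
```

The same structure can serve both patterns: a near-real-time deployment would enqueue each conversation as it ends, while a batch deployment would enqueue a day's worth at once.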

The service integrates with Google Cloud IAM and supports PII redaction workflows, allowing organizations to operationalize conversational intelligence while maintaining security, compliance, and least-privilege access controls.

Reference Architecture & Processing Flow

At a high level, the Google Conversational Analytics API sits between conversation ingestion and enterprise analytics. Raw interactions from voice, chat, and digital channels first pass through transcription pipelines, after which the API extracts structured intelligence signals such as sentiment, intent, and conversational dynamics.

These signals are persisted in analytics platforms like BigQuery and consumed by downstream systems, including BI dashboards, operational applications, and AI-driven workflows. This placement is deliberate: transcription converts speech to text, while the Conversational Analytics API adds the semantic and behavioral layer required to make conversation data analytically useful at scale.

The service operates asynchronously, allowing analysis to scale independently of ingestion workloads. This enables organizations to support both near-real-time operational use cases and large-scale batch analytics without tightly coupling pipeline components.

In enterprise environments, the API integrates with Google Cloud IAM and service accounts to enforce least-privilege access. Built-in support for PII redaction and masking helps organizations meet regulatory and compliance requirements while operationalizing conversational intelligence securely.

Enriching Enterprise Analytics with Conversational Signals

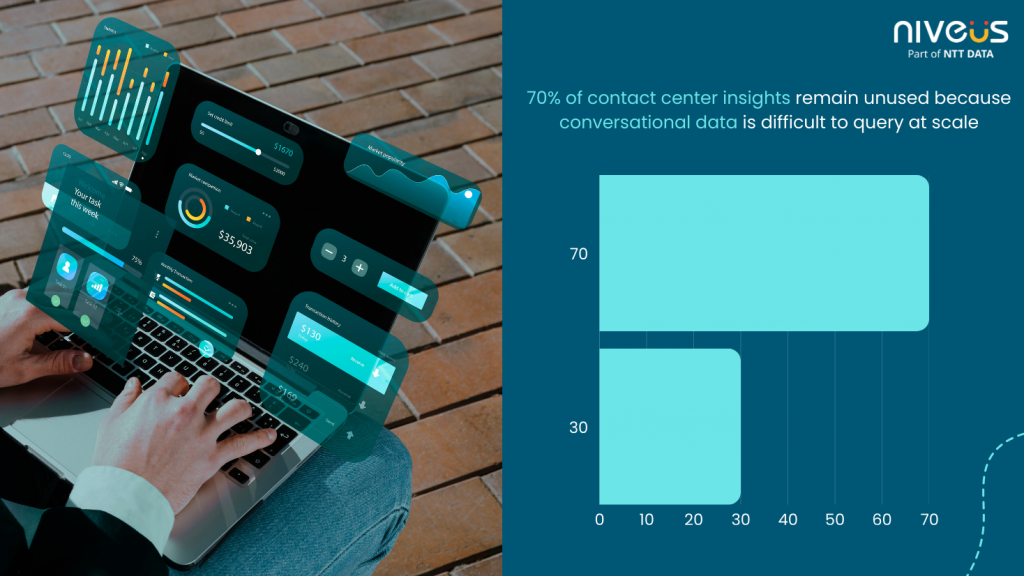

Industry benchmarks show that over 70% of contact center insights remain unused because conversational data is difficult to query at scale (see Fig 1). To address this, conversational signals are exported into BigQuery using schemas that separate conversation-level summaries from turn-level events. This structure enables efficient analysis of multi-turn interactions, such as tracking sentiment drift across a call or identifying intents that precede escalations.

Fig 1: Visual illustration of untapped conversational data in contact centers and the insight gap at scale.

These datasets power operational metrics, including sentiment trends by region or product, top intent drivers, agent talk-time ratios, and repeat-contact indicators. When surfaced in Looker dashboards, supervisors and leaders gain near-real-time visibility into performance. Many organizations also enrich CRM and operational datasets with these signals, improving case routing, prioritization, and customer context.
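
The split between conversation-level summaries and turn-level events described in this section can be sketched in Python as two record types plus a drift calculation. The field names, the -1.0 to 1.0 sentiment scale, and the definition of drift (mean customer sentiment in the second half of a call minus the first half) are assumptions chosen for illustration, not the actual export schema.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Optional


@dataclass
class TurnEvent:
    """One row per turn (hypothetical turn-level table)."""
    conversation_id: str
    turn_index: int
    speaker: str               # "customer" or "agent"
    sentiment: float           # assumed scale: -1.0 to 1.0
    intent: Optional[str] = None


@dataclass
class ConversationSummary:
    """One row per conversation (hypothetical summary table)."""
    conversation_id: str
    region: str
    turn_count: int
    sentiment_drift: float


def sentiment_drift(turns):
    """Mean customer sentiment in the second half minus the first half."""
    ordered = sorted(turns, key=lambda t: t.turn_index)
    customer = [t.sentiment for t in ordered if t.speaker == "customer"]
    if len(customer) < 2:
        return 0.0
    half = len(customer) // 2
    return mean(customer[half:]) - mean(customer[:half])


def summarize(conversation_id, region, turns):
    """Roll turn-level events up into one conversation-level row."""
    return ConversationSummary(
        conversation_id, region, len(turns), sentiment_drift(turns)
    )


# Illustrative values only.
turns = [
    TurnEvent("call-7", 0, "customer", 0.5, intent="billing_question"),
    TurnEvent("call-7", 1, "agent", 0.25),
    TurnEvent("call-7", 2, "customer", 0.25),
    TurnEvent("call-7", 3, "agent", 0.0),
    TurnEvent("call-7", 4, "customer", -0.5, intent="cancel_subscription"),
    TurnEvent("call-7", 5, "customer", -0.75),
]
summary = summarize("call-7", "EMEA", turns)
```

Keeping the turn-level rows alongside the summary is what allows later questions, such as which intent appeared just before sentiment fell, to be answered without reprocessing transcripts.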

Extending Conversational Analytics with Agentic AI

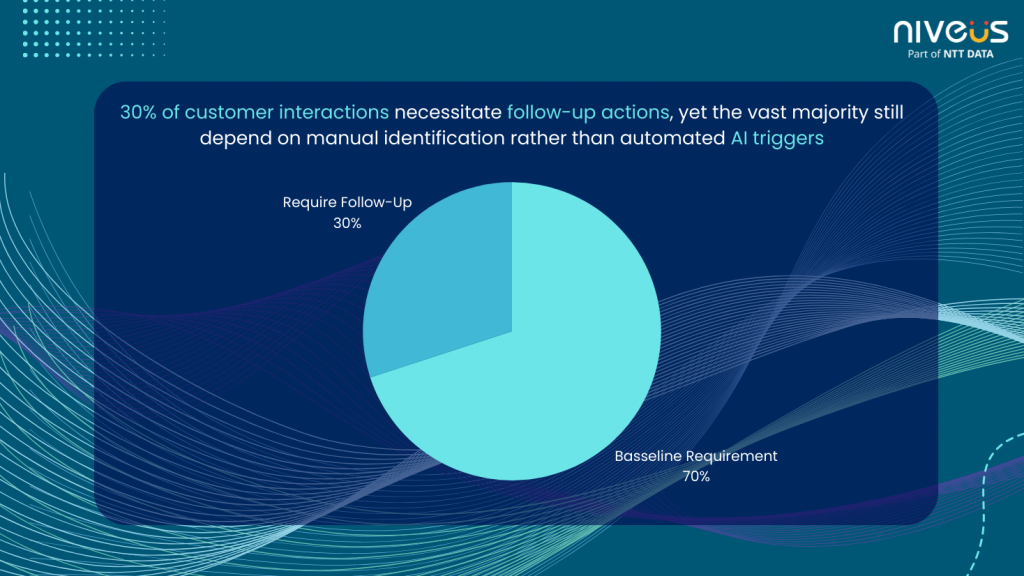

Contact center studies indicate that up to 30% of customer interactions require follow-up actions, yet most rely on manual identification (see Fig 2). Conversational Analytics enables event-driven triggers based on sentiment shifts, detected intents, or compliance phrases, allowing agentic AI systems to act in near real time.

Fig 2: Visual illustration of customer interactions that require follow-up but still rely largely on manual identification rather than AI-driven triggers.

Common patterns include agent assist recommendations, automated case creation, proactive customer follow-ups, and intelligent escalation. In regulated environments, human-in-the-loop workflows ensure oversight while preserving speed. As confidence grows, organizations move from static insight extraction to conversation-driven decision systems, where customer interactions directly activate operational and business workflows.
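
A minimal Python sketch of the trigger patterns in this section follows. The rule thresholds, intent labels, phrase strings, and action names are hypothetical, and the `needs_human_approval` flag is one assumed way to represent a human-in-the-loop gate; a real deployment would define these from its own policies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConversationEvent:
    """Hypothetical event emitted once a conversation has been analyzed."""
    conversation_id: str
    sentiment_drift: float   # negative means sentiment worsened
    intents: tuple
    phrases: tuple


@dataclass(frozen=True)
class Action:
    name: str
    conversation_id: str
    needs_human_approval: bool


def evaluate(event, regulated=False):
    """Map analyzed signals to follow-up actions using simple rules."""
    actions = []
    # Sharp sentiment drop: escalate without waiting for approval.
    if event.sentiment_drift <= -0.5:
        actions.append(
            Action("escalate_to_supervisor", event.conversation_id, False)
        )
    # Cancellation intent: open a case; gate it in regulated environments.
    if "cancel_subscription" in event.intents:
        actions.append(
            Action("create_retention_case", event.conversation_id, regulated)
        )
    # Missing compliance phrase: always route to a human reviewer.
    if "recording disclosure" not in event.phrases:
        actions.append(
            Action("flag_compliance_review", event.conversation_id, True)
        )
    return actions


# Illustrative values only.
event = ConversationEvent(
    conversation_id="call-7",
    sentiment_drift=-1.0,
    intents=("billing_question", "cancel_subscription"),
    phrases=("speak to a manager",),
)
actions = evaluate(event, regulated=True)
```

Expressing each trigger as an explicit rule keeps the system auditable, which matters most in the regulated settings where human review stays in the loop.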

Conclusion: Designing for Conversation-Driven Decisions

Every customer conversation contains insight about demand, friction, risk, and opportunity. Yet without the right architecture, that insight stays buried in transcripts and call recordings. The difference between organizations that hear customers and those that learn from them is not volume; it’s how conversations are modeled, analyzed, and activated.

By embedding the Google Conversational Analytics API into the analytics stack, enterprises turn raw dialogue into structured intelligence that drives dashboards, automation, and agentic AI. Conversations stop being historical artifacts and become real-time inputs to decision-making systems. In a world of exploding interaction volumes, this shift from recording conversations to engineering learning systems is what defines the next generation of customer operations.